14 Questions to Avoid Asking ChatGPT

ChatGPT has quickly become a go-to digital tool for a wide range of tasks, from simple searches to planning events. While it's easy to be skeptical about its usefulness, there are undeniable benefits to using it. However, there are also numerous situations where relying on ChatGPT or any chatbot is not only unwise but potentially dangerous.

One of the most significant issues with ChatGPT is that users often treat it as an oracle rather than a multi-modal large language model (LLM) that can be prone to misinformation and hallucinations. It's important to understand that while chatbots can offer utility, they have clear limitations. Here are 14 things that should be addressed by a real person instead of a chatbot.

Anything Involving Personal Information

If you take one thing away from this article, let it be this: Your chats with ChatGPT are not private. The platform’s privacy policy clearly states that it collects your prompts and uploaded files. While OpenAI may not use your data as aggressively as some other tech companies, you should still avoid sharing personal information.

There are several reasons for this:

- Your chats are not always between only you and OpenAI. Data breaches have occurred in the past, exposing private conversations.

- Chatbots can sometimes regurgitate what they've been trained on, word for word. If your chats are used for training, information you give ChatGPT could resurface later in someone else's conversation.

Anything Illegal

In theory, chatbots will not help with anything illegal. However, people have found ways to bypass these restrictions. For example, disguising a prohibited request as poetry can get you answers on questionable topics. Ethical concerns aside, it's still risky to ask ChatGPT for help with anything illegal.

The first reason is similar to the one about personal information: Your chats are not private and can be used against you in court. The second reason is that since ChatGPT is prone to hallucination, the information you receive could be incorrect and even dangerous.

Requests to Analyze Protected Information

Many people use ChatGPT as a summary and analysis tool. You can input large amounts of text and have it condense the material, check for errors, and find correlations. While this can be useful at work, you should avoid using ChatGPT if the information you're inputting is proprietary or protected.

This is because OpenAI has access to your prompts. Imagine a healthcare professional entering HIPAA-protected information into ChatGPT. This could put sensitive medical data at risk.

Medical Advice

ChatGPT cannot be trusted for medical advice. It often provides incorrect or misleading information. Even some medical professionals who have tried to use it responsibly admit that it's only good for very generalized answers.

People have gotten sick from following chatbot advice. Chatbots have no way of discerning truth from fiction. They generate answers from patterns in their training data, and that data may not be accurate.

Relationship Counseling

It's alarming how many people turn to ChatGPT for relationship advice. Chatbots are trained on a vast amount of internet data, including unreliable sources. There's a risk that the advice given could be harmful or misguided.

Chatbots lack the ability to understand the full context of a relationship. They cannot detect non-verbal cues or subtle hints that might indicate an abusive situation.

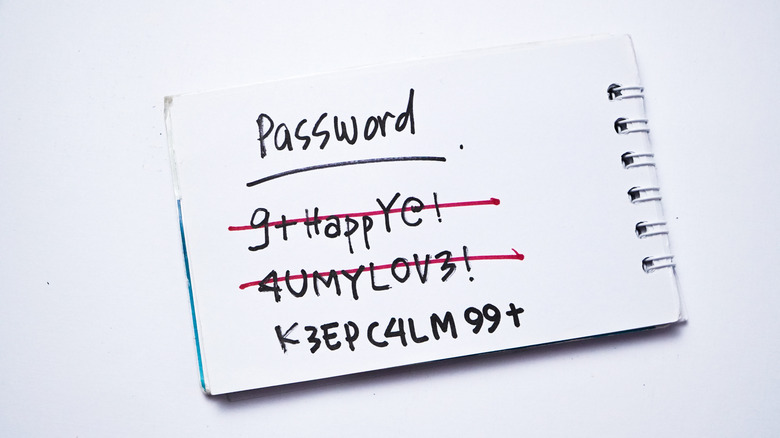

Password Generation

While it may seem safe to use ChatGPT for generating passwords, it's not advisable. A chatbot may hand the same or a similar password to other users, and anything it generates sits in your chat history, which is not private. Additionally, ChatGPT may not generate strong enough passwords.

Instead, consider using a password generator from a reputable source. Most major password managers include one for free.
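If you would rather not install anything, a password can also be generated locally so that it never passes through a chat window. The following is a minimal sketch using Python's standard `secrets` module. The function name `generate_password`, the default length of 20, and the rule requiring one character from each class are illustrative assumptions, not a recommendation from any particular password manager.

```python
import secrets
import string


def generate_password(length: int = 20) -> str:
    """Return a random password containing lowercase, uppercase, digit, and symbol characters.

    The length default and the "one of each class" rule are assumptions
    chosen for illustration; adjust them to the site's requirements.
    """
    if length < 4:
        raise ValueError("length must be at least 4 to fit one character of each class")

    alphabet = string.ascii_letters + string.digits + string.punctuation

    # Draw candidates from the OS-backed secure random source until one
    # contains every character class. Rejecting whole candidates keeps each
    # position uniformly random instead of forcing classes into fixed slots.
    while True:
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        if (
            any(c.islower() for c in candidate)
            and any(c.isupper() for c in candidate)
            and any(c.isdigit() for c in candidate)
            and any(c in string.punctuation for c in candidate)
        ):
            return candidate


if __name__ == "__main__":
    print(generate_password())
```

Because the password is created on your own machine, it is never stored in a chat history. You still need to keep it somewhere safe, which is where a password manager remains the better long-term option.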

Therapy

Therapy is essential for mental health, but using ChatGPT for therapy is not recommended. Chatbots can be sycophantic, reinforcing harmful behaviors rather than challenging them. They lack the objectivity needed for effective therapy.

There have been reports linking ChatGPT conversations to a user's suicide, highlighting the dangers of relying on chatbots for mental health support.

Repair Help

Using ChatGPT for repair help is not advisable. While it may provide general guidance, it often lacks the specific knowledge needed for complex repairs. This can lead to further damage or safety risks.

For serious repairs, it's best to consult a professional.

Finance Advice

ChatGPT is not a reliable source for financial advice. It can provide false or fabricated information, especially when dealing with complex subjects like stocks, retirement accounts, and real estate.

For critical financial decisions, it's best to consult a professional.

Help with Homework

ChatGPT has a significant impact on education. While it can provide general information, it's not a substitute for learning how to acquire and analyze information independently.

Relying too much on ChatGPT can weaken your own reasoning skills and create dependency.

Drafting Legal Documents

ChatGPT is not suitable for drafting legal documents. Lawyers have spent years studying and passing rigorous exams to practice law. Chatbots lack the expertise needed to handle complex legal matters.

Future Predictions

Chatbots are not reliable for predicting the future. They are prone to making mistakes and cannot account for the complexities of future events.

Advice in an Emergency

In emergencies, time is critical. ChatGPT is not a reliable source for emergency advice. It's better to prepare for emergencies in advance and seek professional help when needed.

Anything Political

Chatbots are not neutral and can exhibit bias based on their training data. Politics is subjective, and ChatGPT may reinforce existing beliefs or provide biased information.
