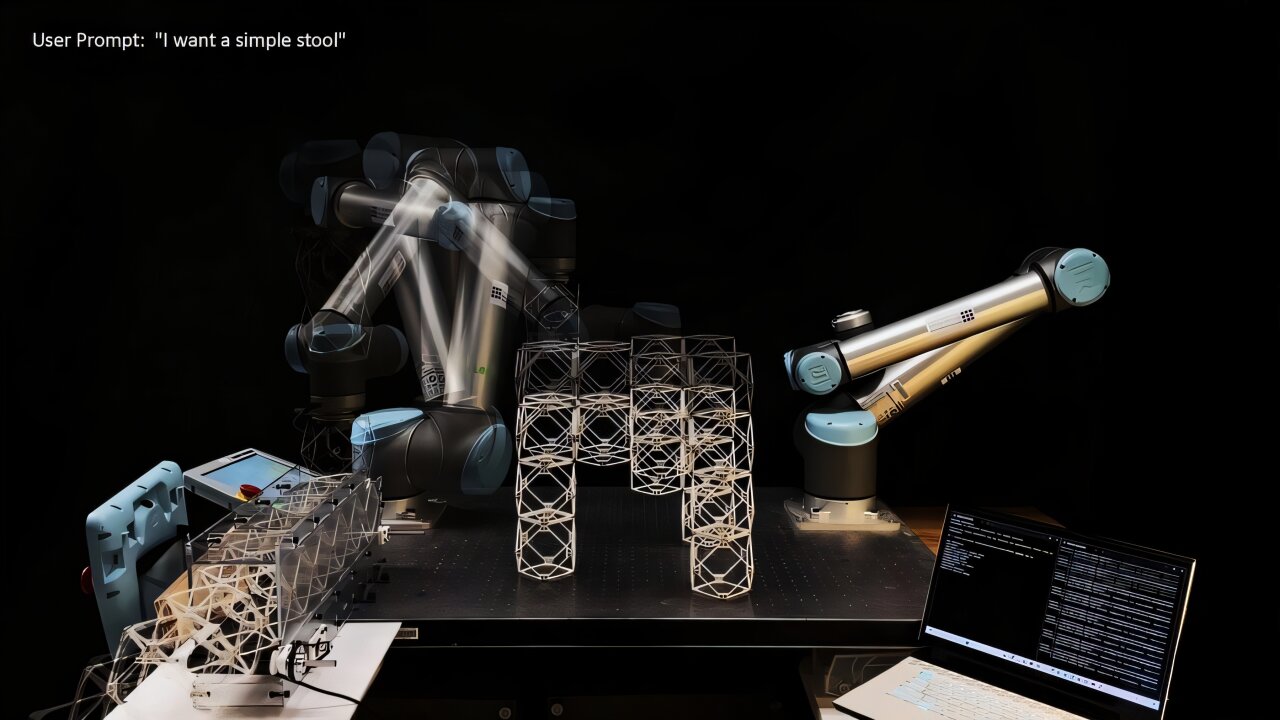

AI-powered robotic system brings speech to life as physical objects on demand

Generative AI and robotics are rapidly advancing, bringing us closer to a future where we can request an object and have it created in just minutes. A groundbreaking development from the Massachusetts Institute of Technology (MIT) is the speech-to-reality system, an AI-driven workflow that enables users to speak objects into existence. This innovative technology allows a robotic arm to construct items such as furniture using modular components, with the entire process taking as little as five minutes.

The speech-to-reality system operates by receiving spoken input from a user, such as "I want a simple stool." The system then uses this input to generate a 3D model of the object, which is subsequently broken down into assembly components. These components are then used by the robotic arm to build the physical object. To date, the researchers have successfully created a variety of items, including stools, shelves, chairs, small tables, and even decorative pieces like a dog statue.

According to Alexander Htet Kyaw, a graduate student at MIT and Morningside Academy for Design (MAD) fellow, this project represents a significant leap forward in combining natural language processing, 3D generative AI, and robotic assembly. "These are rapidly advancing areas of research that haven't been brought together before in a way that you can actually make physical objects just from a simple speech prompt," he explains.

The concept of the speech-to-reality system originated when Kyaw took Professor Neil Gershenfeld's course, "How to Make Almost Anything." While working on the project at the MIT Center for Bits and Atoms (CBA), directed by Gershenfeld, he collaborated with other graduate students, including Se Hwan Jeon from the Department of Mechanical Engineering and Miana Smith from CBA.

How the speech-to-reality system works

The speech-to-reality system begins with speech recognition, which processes the user's request using a large language model. This is followed by 3D generative AI, which creates a digital mesh representation of the object. A voxelization algorithm then breaks down the 3D mesh into assembly components.
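To make the voxelization step concrete, here is a minimal sketch in Python. It is not the researchers' algorithm, which the article does not describe. It assumes the mesh surface has already been sampled into 3D points and simply bins those points into cubic grid cells. The function name `voxelize`, the `voxel_size` parameter, and the sample coordinates are all hypothetical.

```python
import math


def voxelize(points, voxel_size):
    """Map 3D points (x, y, z) to the set of integer grid cells they fall in.

    Simplifying assumption: the mesh has been sampled into surface points,
    and each occupied cell corresponds to one modular cube.
    """
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    return {
        (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        for x, y, z in points
    }


# Hypothetical sample points in meters; two of them share the same cell.
sample_points = [
    (0.01, 0.02, 0.00),
    (0.04, 0.03, 0.01),
    (0.12, 0.00, 0.00),
    (0.00, 0.00, 0.11),
]
cells = voxelize(sample_points, voxel_size=0.1)
print(sorted(cells))
```

A larger `voxel_size` gives fewer, coarser cells, which in a system like this would mean fewer components for the robot to place at the cost of geometric detail.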

Next, geometric processing modifies the AI-generated assembly to account for real-world fabrication and physical constraints, such as the number of components, overhangs, and connectivity of the geometry. This is followed by the creation of a feasible assembly sequence and automated path planning for the robotic arm to assemble the physical object from the user's prompt.
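One way to picture the sequencing step is a greedy ordering over the voxel grid. The sketch below is an illustration under stated assumptions, not the method from the paper: it assumes a cube can be placed if it sits on the lowest layer or shares a face with a cube that is already placed, and it rejects geometry that is not connected. The function name `assembly_sequence` and the example shape are hypothetical.

```python
# Offsets to the six face-sharing neighbors of a grid cell.
FACE_NEIGHBORS = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def assembly_sequence(cells):
    """Return a placement order for voxel cells given as (x, y, z) tuples.

    Assumed feasibility rule: a cell may be placed if it is on the lowest
    layer or touches an already placed cell face to face.
    """
    remaining = set(cells)
    if not remaining:
        return []
    ground_z = min(z for _, _, z in remaining)
    placed = set()
    order = []
    while remaining:
        candidates = [
            (x, y, z)
            for (x, y, z) in remaining
            if z == ground_z
            or any((x + dx, y + dy, z + dz) in placed for dx, dy, dz in FACE_NEIGHBORS)
        ]
        if not candidates:
            raise ValueError("geometry is disconnected; no feasible sequence")
        # Prefer the lowest cell, then break ties deterministically by y and x.
        next_cell = min(candidates, key=lambda c: (c[2], c[1], c[0]))
        order.append(next_cell)
        placed.add(next_cell)
        remaining.remove(next_cell)
    return order


# Hypothetical bridge shape: two one-cube legs joined by a three-cube top.
bridge = {(0, 0, 0), (2, 0, 0), (0, 0, 1), (1, 0, 1), (2, 0, 1)}
sequence = assembly_sequence(bridge)
print(sequence)
```

Here both legs are placed before the top row, and the middle cube of the top row is placed only after one of its side neighbors is in position. A real planner would also need to account for overhang limits and collision-free paths for the arm, which this sketch leaves out.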

By using natural language as the interface, the system makes design and manufacturing more accessible to people without expertise in 3D modeling or robotic programming. Unlike 3D printing, which can take hours or days, this system builds objects within minutes.

"This project is an interface between humans, AI, and robots to co-create the world around us," Kyaw says. "Imagine a scenario where you say 'I want a chair,' and within five minutes a physical chair materializes in front of you."

Future improvements and broader vision

The team has immediate plans to improve the weight-bearing capability of the furniture by changing the means of connecting the cubes from magnets to more robust connections. "We've also developed pipelines for converting voxel structures into feasible assembly sequences for small, distributed mobile robots, which could help translate this work to structures at any size scale," Smith says.

The use of modular components aims to eliminate waste by allowing objects to be disassembled and reassembled into different forms. For example, a sofa that is no longer needed could be taken apart and rebuilt as a bed.

Kyaw, who has experience using gesture recognition and augmented reality to interact with robots in the fabrication process, is currently working on incorporating both speech and gestural control into the speech-to-reality system.

Drawing inspiration from the replicator in the "Star Trek" franchise and the robots in the animated film "Big Hero 6," Kyaw envisions a future where people can create physical objects quickly, accessibly, and sustainably. "I'm working toward a future where the very essence of matter is truly in your control. One where reality can be generated on demand," he says.

The team presented their paper, "Speech to Reality: On-Demand Production using Natural Language, 3D Generative AI, and Discrete Robotic Assembly," at the Association for Computing Machinery (ACM) Symposium on Computational Fabrication (SCF '25) held at MIT on Nov. 21.
