I Wish Apple Made This Mac Tool – It’s Better Than Spotlight and You Should Try It

A New Spotlight Experience with Vector

One of the most significant updates that came with macOS Tahoe was a turbocharged Spotlight. Apple addressed several minor issues and introduced new features that cater to power users. Naturally, there were some changes that didn’t sit well with everyone.

For example, the removal of the classic Launchpad generated a lot of backlash and led to the development of various apps that recreate the experience. Similarly, the Spotlight upgrades borrowed ideas from productivity tools like Raycast, but they haven't always been as well received as the third-party originals.

Personally, I find the new Spotlight experience a bit overwhelming and somewhat lacking in functionality. That's where Vector comes into play. It's a minimalist Spotlight replacement developed by Ethan Lipnick, a former Apple engineer, and it offers some really impressive AI-driven conveniences.

What Does Vector Do?

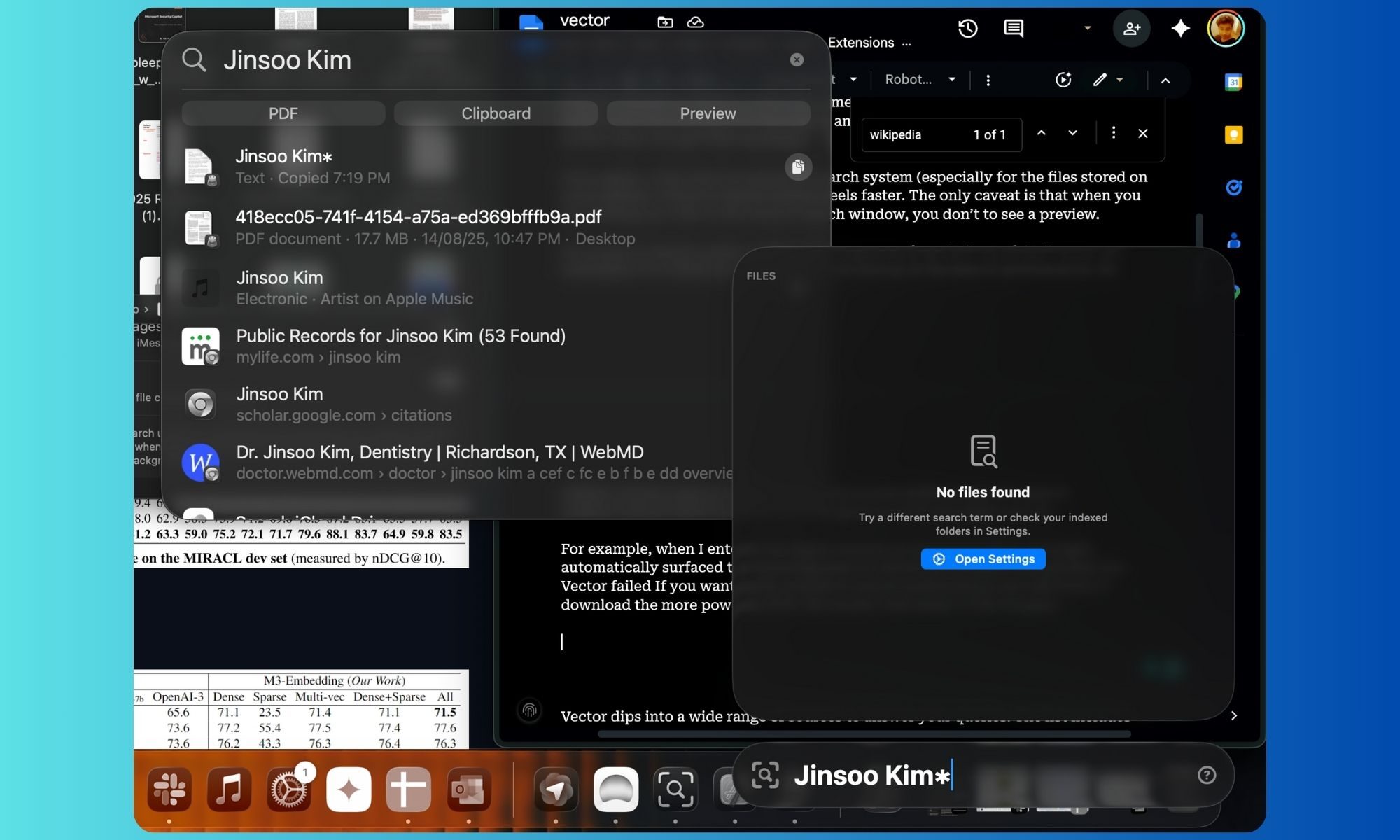

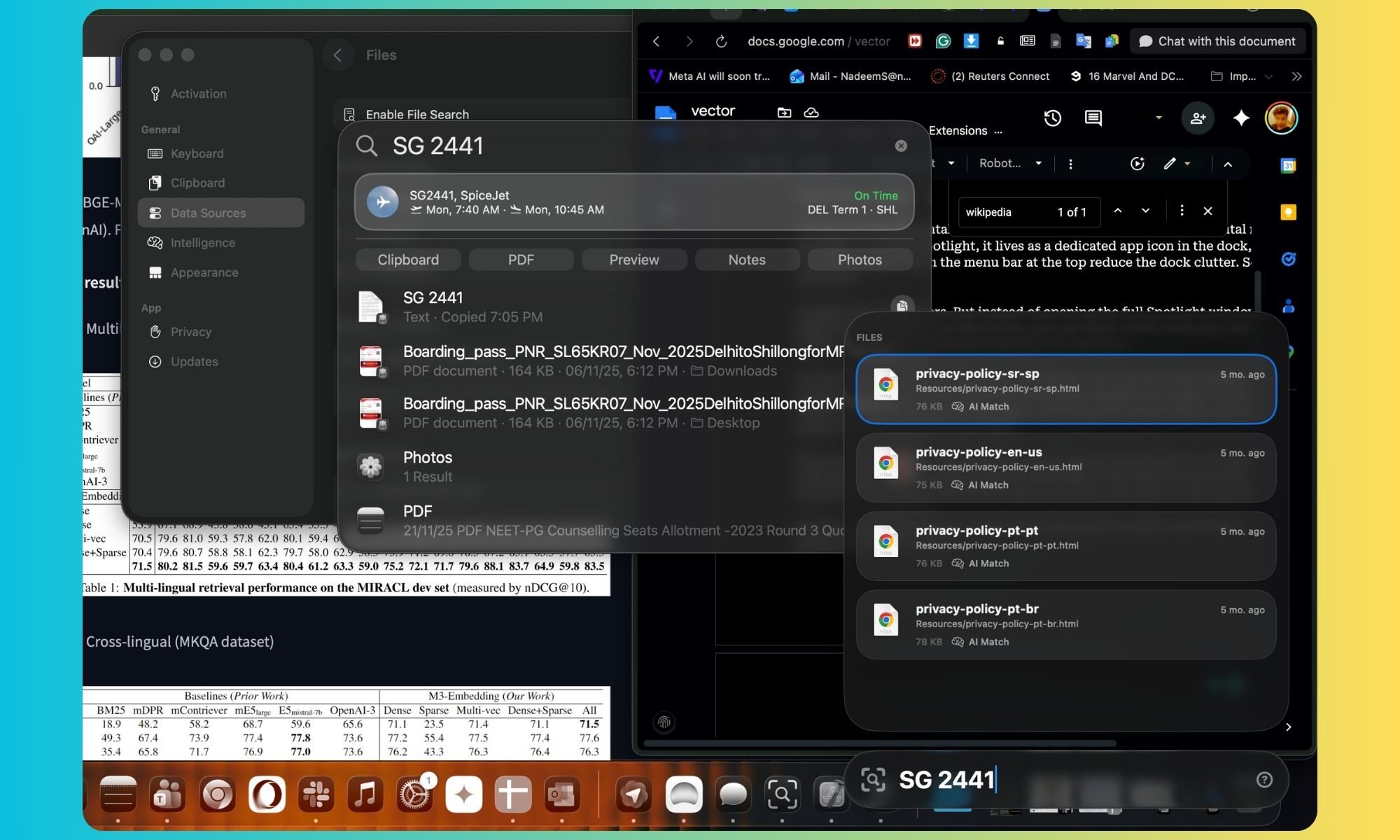

At its core, Vector aims to replace the broad role of Spotlight. Like Spotlight, it has a dedicated app icon in the dock, but you can also access it from the menu bar to reduce dock clutter. So, what can it do?

Launching an app is just the beginning. Instead of opening the full Spotlight window that takes up central space on the screen, you can launch Vector from any corner of the screen to keep things tidy and visually non-intrusive.

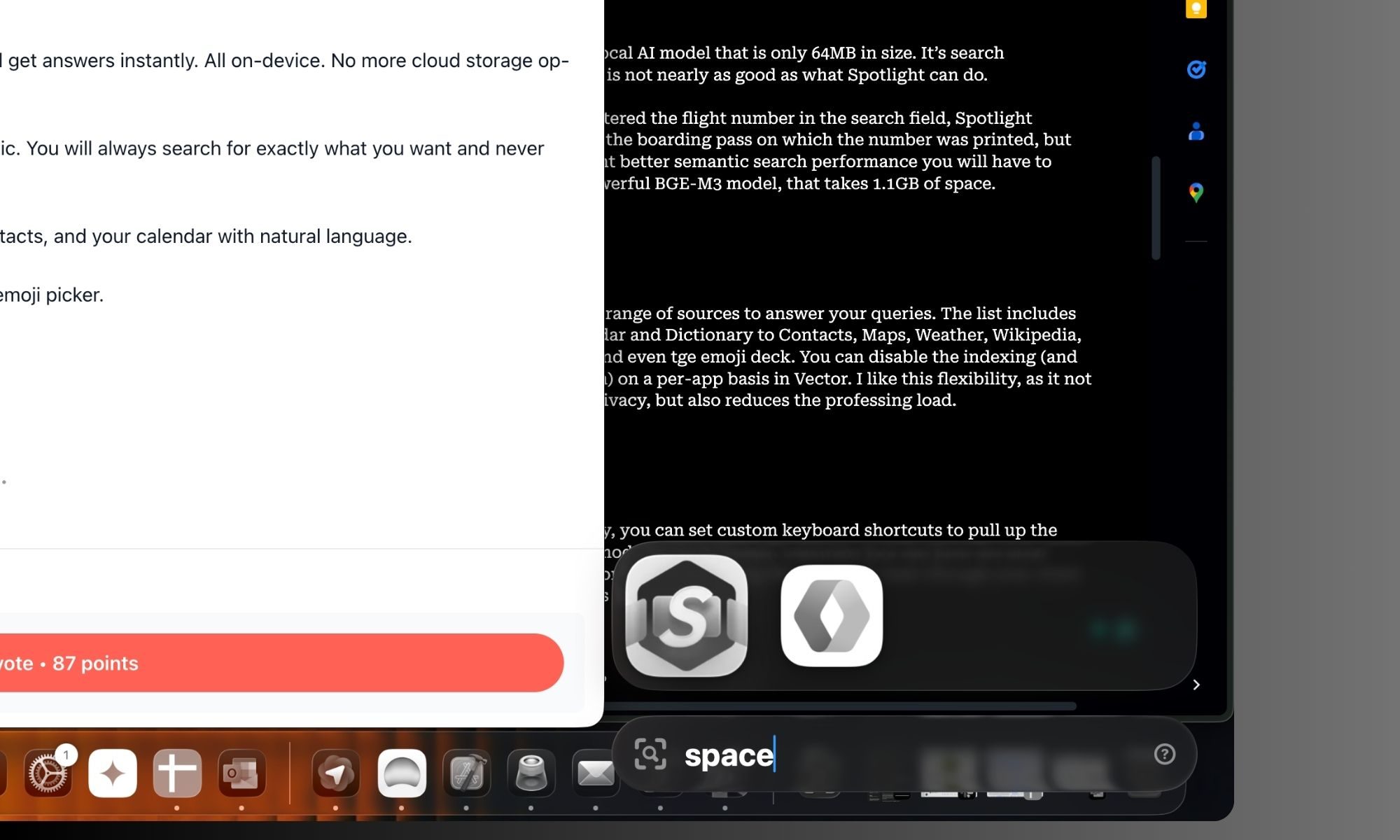

Flexibility is key. You can set custom keyboard shortcuts to bring up the main panel, clipboard mode, or emoji picker. Likewise, you can choose the most convenient keyboard combination for starting a file search or browsing your chats in the built-in Messages app.

Vector has a clean design and snappy animations that give it an Apple-like feel. Interacting with it feels quicker than interacting with Spotlight, and its search speed is nearly on par with Spotlight's.

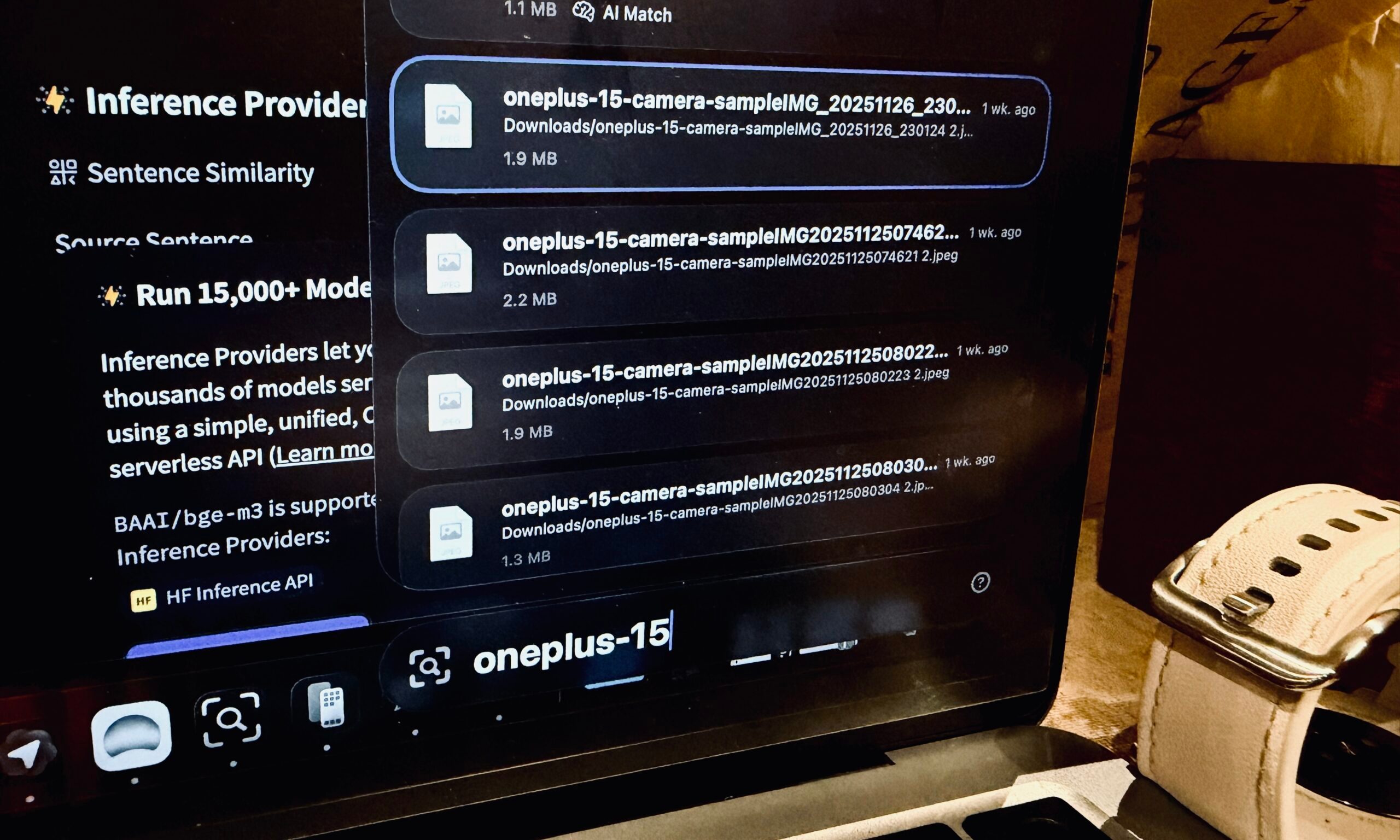

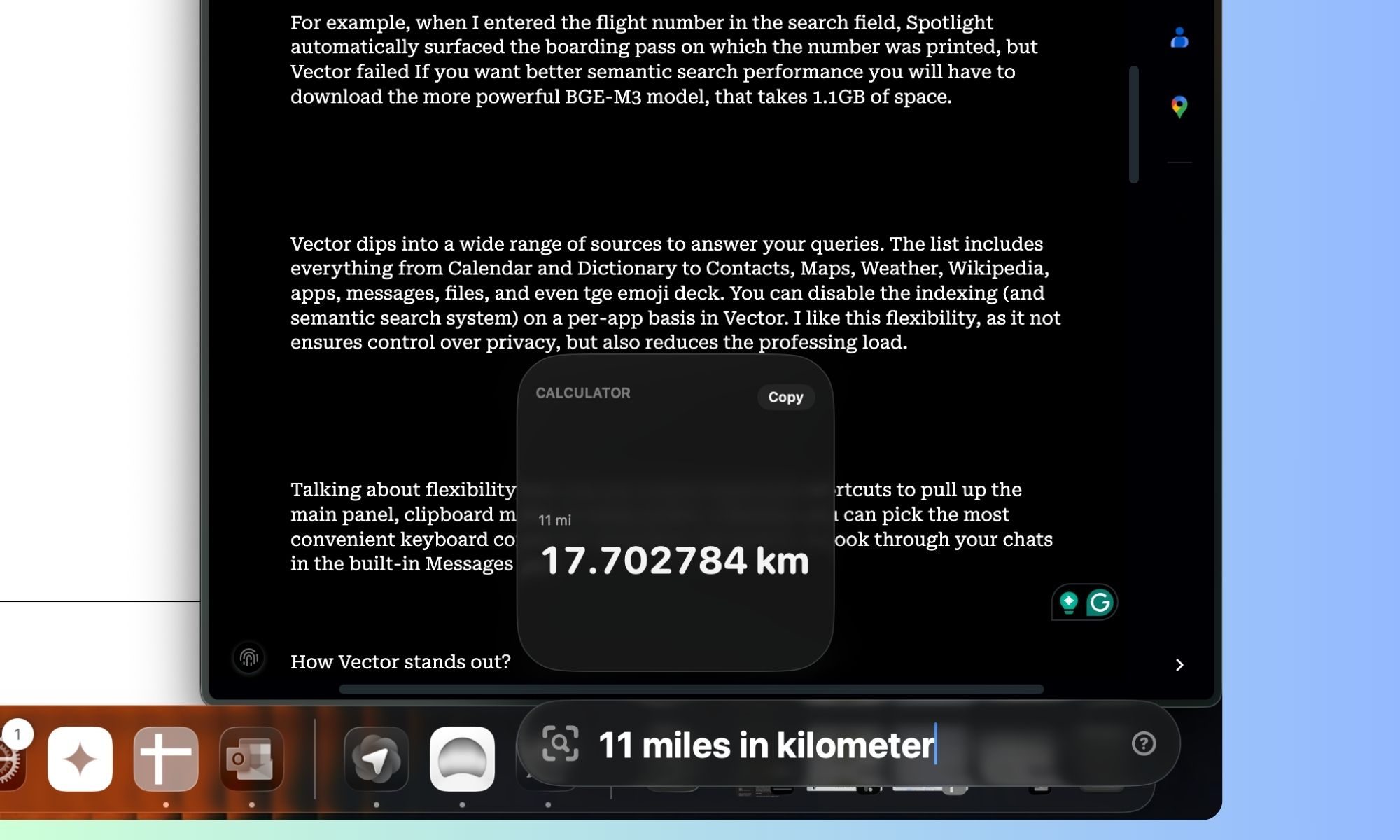

I noticed that the semantic search system (especially for files stored on your system) returns results quickly. The only downside is that when searching for a file in the Vector search window, you don’t see a preview. This can be problematic if you have similarly named files, like ABC-1 and ABC-2. Another minor issue is search quality. By default, Vector uses a local AI model that is only 64MB in size, and its results aren't as good as Spotlight’s.

For instance, when I entered a flight number in the search field, Spotlight automatically found the boarding pass containing that number, but Vector failed. For better semantic search output, you need to download the more powerful BGE-M3 model, which takes up 1.1GB of space.
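To give a rough idea of what "semantic search" means here, below is a minimal sketch of the general technique: text is turned into vectors (embeddings), and results are ranked by how closely each vector points in the same direction as the query. This is not Vector's actual code. The file names, the three-number vectors, and the function names are all made up for illustration, and a real embedding model would produce much longer vectors.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)

def rank_documents(query_vec, doc_vecs):
    """Return document names sorted from most to least similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), name)
              for name, vec in doc_vecs.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored]

# Toy 3-dimensional "embeddings", invented for illustration only.
DOCS = {
    "boarding-pass.pdf": [0.9, 0.1, 0.0],
    "tax-return.pdf": [0.0, 0.2, 0.9],
    "hotel-booking.pdf": [0.6, 0.6, 0.1],
}
QUERY = [1.0, 0.2, 0.0]  # pretend embedding of "my flight ticket"

print(rank_documents(QUERY, DOCS))
# ['boarding-pass.pdf', 'hotel-booking.pdf', 'tax-return.pdf']
```

In a setup like this, swapping in a bigger model changes the vectors, not the ranking step, which is why the choice of model matters so much for result quality.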

Lots of Hits, a Few Misses

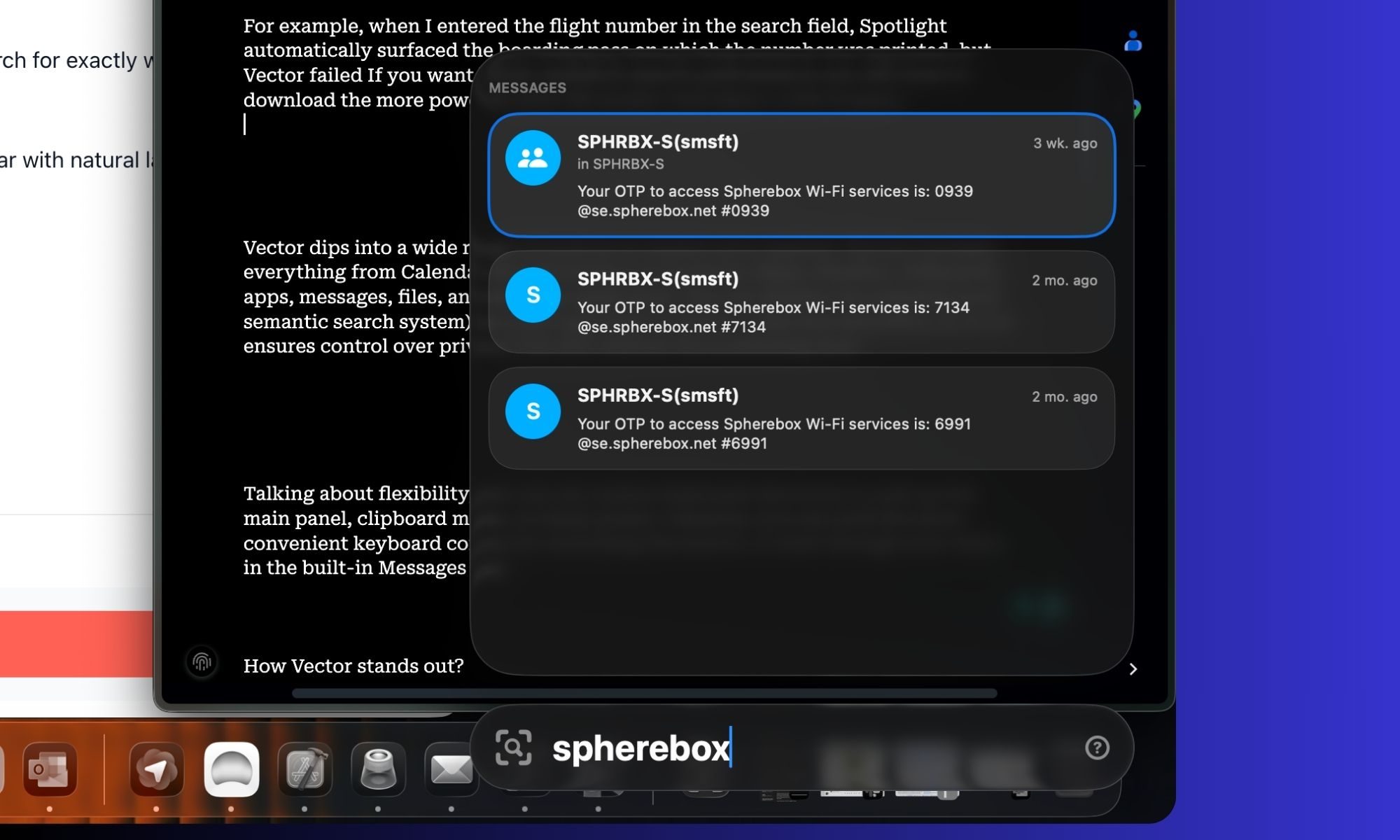

The indexing process is quite opaque, even though it appears to work fine with the files stored on the system. For example, I couldn’t pull up my most recent chats with friends and family members from the same day. However, random service messages and codes returned valid results when I searched within Messages.

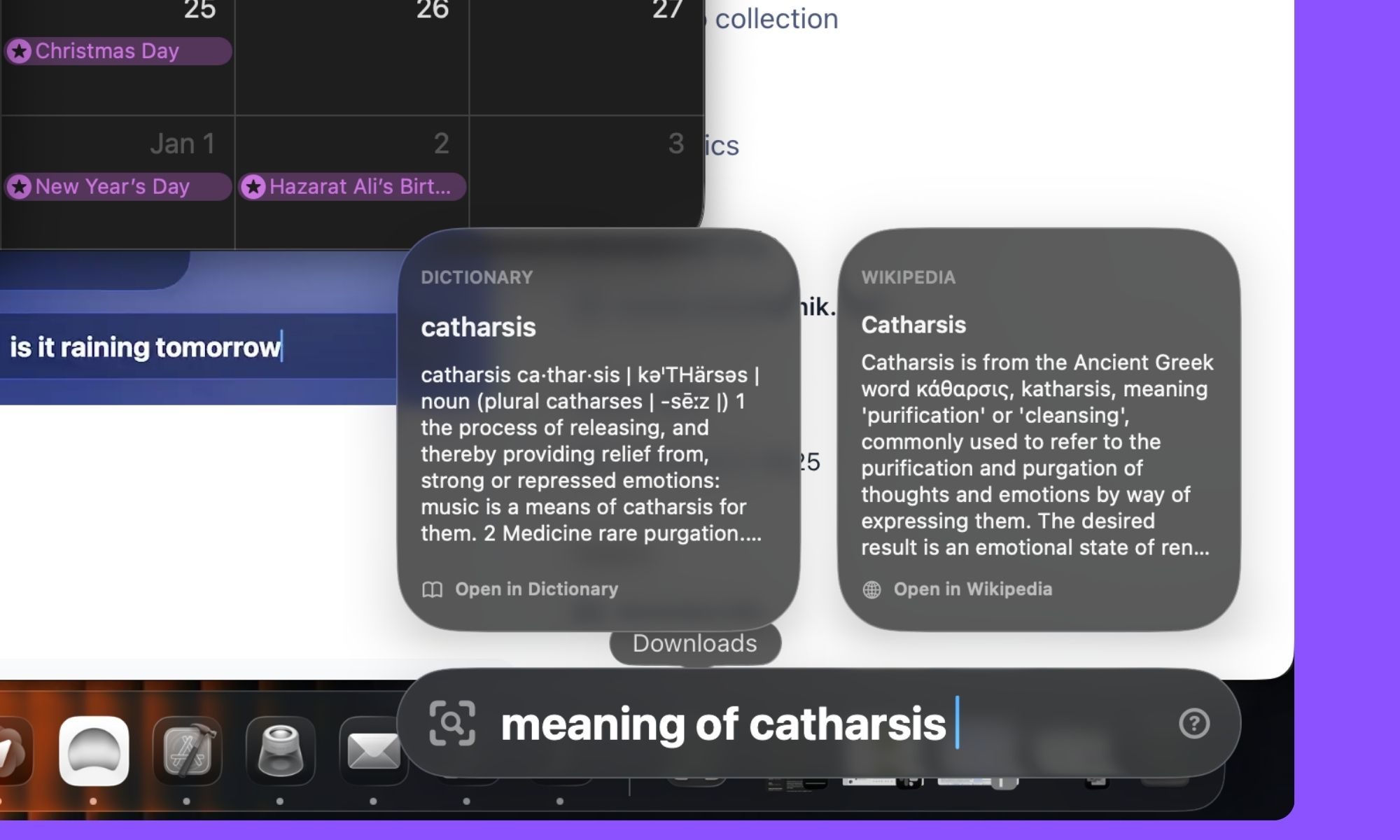

The semantic understanding is hit or miss. When I looked up “Definition of catharsis,” I got results from the Dictionary app and Wikipedia. But when I tried a contextual search for content within PDF files, Vector failed.

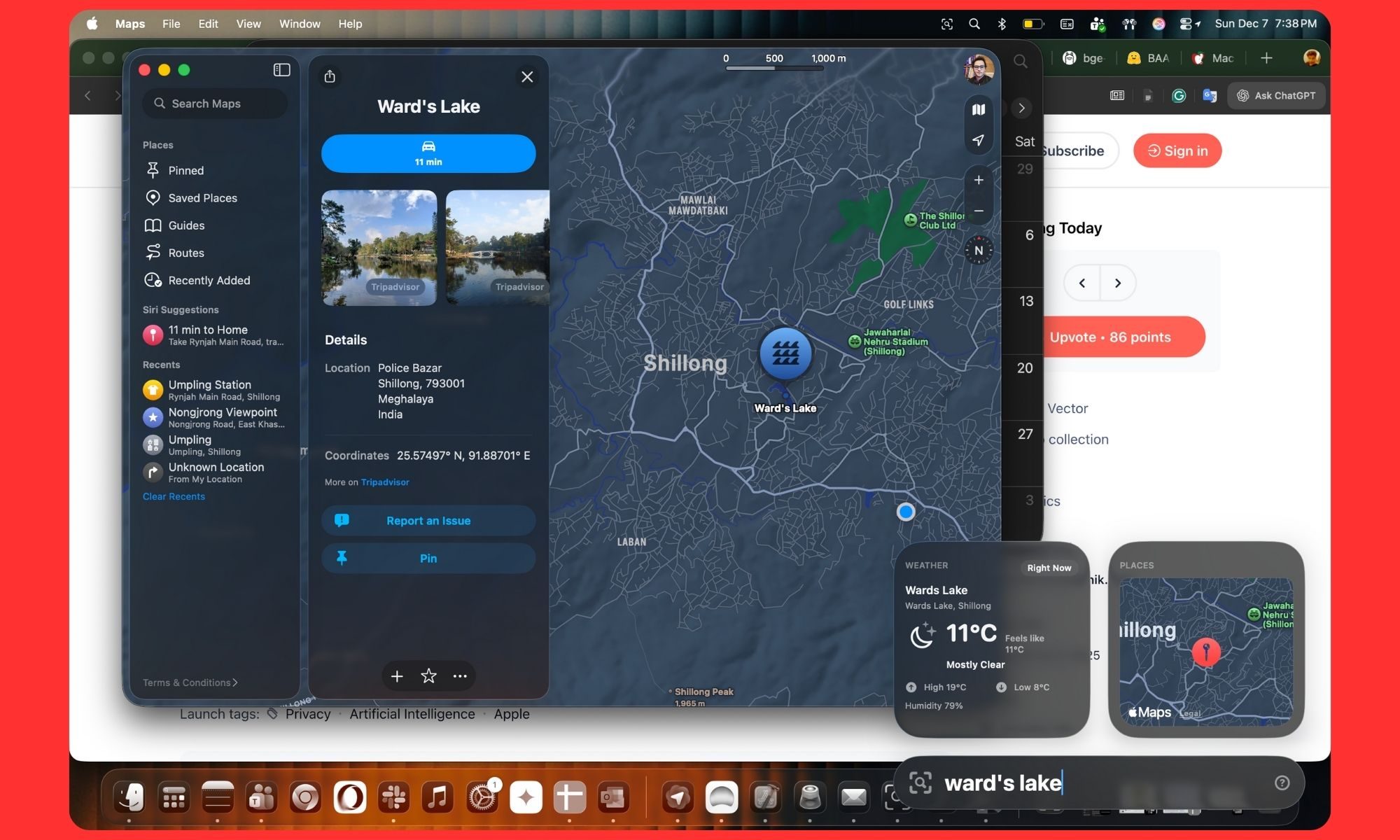

It worked well at pulling up forecast information from the Weather app but struggled with details from the Calendar app, even with straightforward queries. Finding entries that should lead to a Maps view was reliable, but as soon as I tried natural language queries like “distance between Umpling and Laitumkhrah,” it stumbled.

Vector pulls from a wide range of sources to answer your queries. The list includes everything from Calendar and Dictionary to Contacts, Maps, Weather, Wikipedia, apps, messages, files, and even the emoji deck. You can disable the indexing (and semantic search system) on a per-app basis in Vector. I appreciate this flexibility, as it ensures control over privacy and reduces processing load.

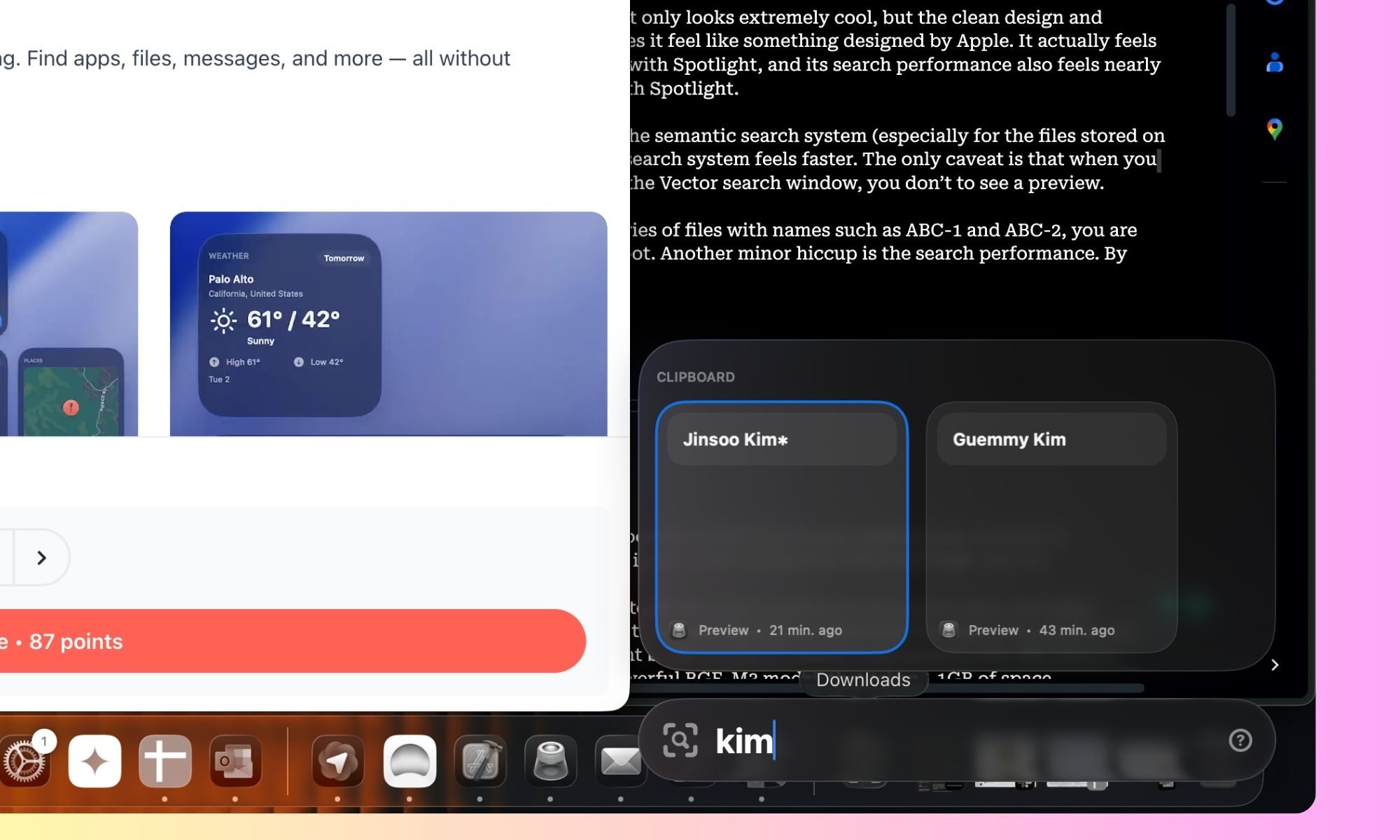

On the positive side, I absolutely love the clipboard system. When you summon it, you get a sliding carousel of cards that feels buttery smooth to slide through. Each card also shows the app from which the content was copied, along with the date and/or approximate time.

Vector offers plenty of flexibility in how you interact with the app. You can use it solely as an app launcher, deploy it as a system-wide semantic search system, or simply explore its built-in clipboard features. Additionally, you can select between six positions where the Vector window opens.

I kept it anchored to the lower right corner, as it looks neat and doesn’t disrupt the view of foreground app windows. The clipboard system also lets you set an auto-delete period ranging from a day to a full week or month. You can also choose to keep everything in the clipboard history forever without worrying about security, as all the copied content is processed and saved only on the device.
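The auto-delete idea is simple enough to sketch. The snippet below is a hypothetical illustration of how a retention setting could prune a clipboard history. The preset names, the 30-day month, and the entry fields are my assumptions, not something taken from Vector.

```python
from datetime import datetime, timedelta

# Hypothetical retention presets mirroring the options described above.
RETENTION = {
    "day": timedelta(days=1),
    "week": timedelta(weeks=1),
    "month": timedelta(days=30),  # assumption: a month is treated as 30 days
    "forever": None,              # never delete anything
}

def prune_clipboard(entries, policy, now):
    """Keep only entries copied within the retention window for `policy`."""
    window = RETENTION[policy]
    if window is None:
        return list(entries)
    cutoff = now - window
    return [e for e in entries if e["copied_at"] >= cutoff]

now = datetime(2025, 11, 15, 12, 0)
history = [
    {"text": "meeting link", "source_app": "Safari", "copied_at": now - timedelta(hours=3)},
    {"text": "tracking number", "source_app": "Mail", "copied_at": now - timedelta(days=3)},
    {"text": "old address", "source_app": "Notes", "copied_at": now - timedelta(days=45)},
]

for policy in RETENTION:
    kept = prune_clipboard(history, policy, now)
    print(policy, [e["text"] for e in kept])
```

Each entry carries its source app and timestamp, which is the same information the clipboard cards display.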

Reading the Room (for the Silicon Inside)

I have repeatedly mentioned that Apple’s silicon is in a league of its own. Whether it's Macs or iPhones, the balance of raw performance and efficiency is miles ahead. However, Apple doesn’t let you tinker with the performance output.

On a Windows machine, you get vendor utilities like Armoury Crate (on Asus ROG machines) and third-party apps that offer granular control over everything from GPU frequency to fan speed. Even phones such as the Red Magic 10S Pro and OnePlus 15 let you tap into the real potential by adjusting the performance presets.

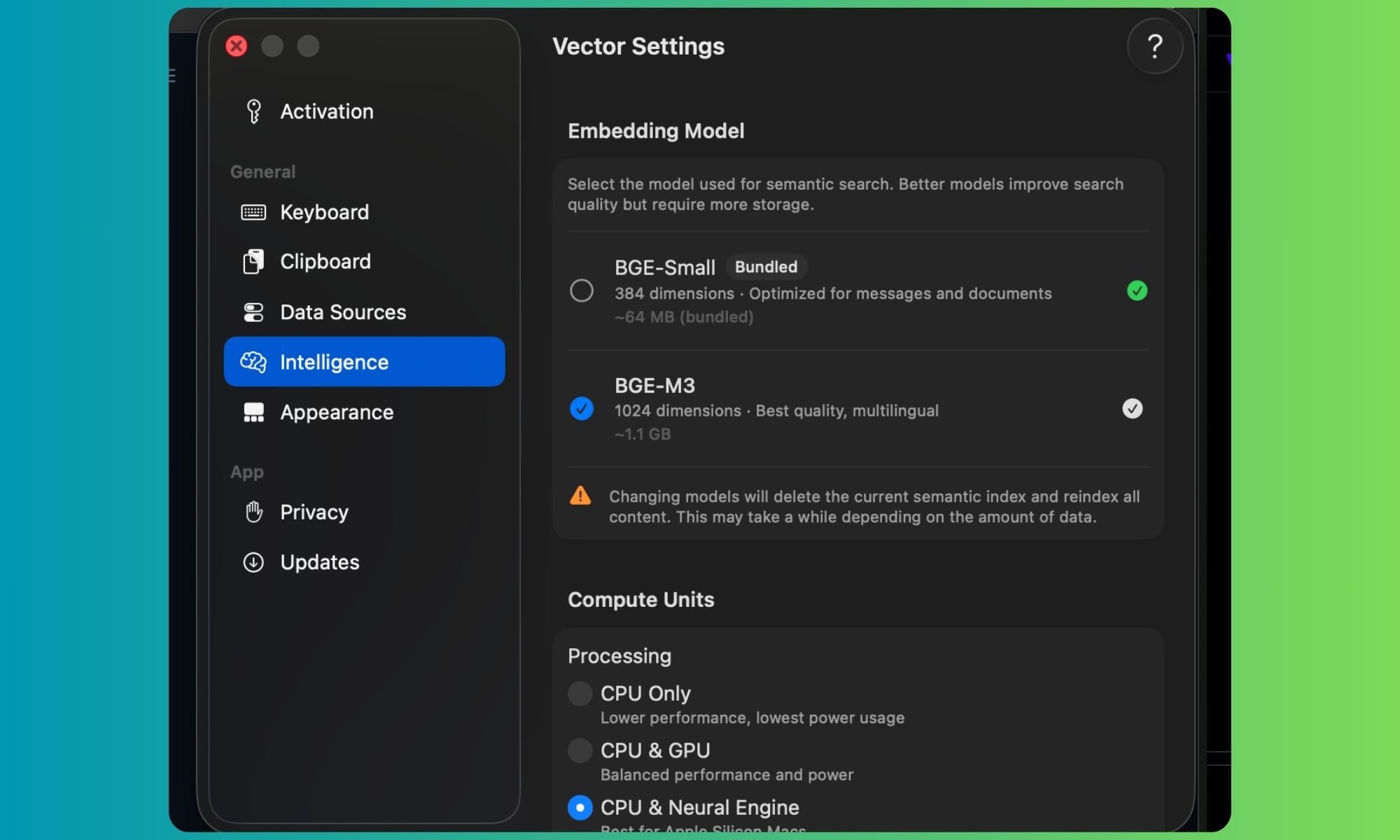

Vector doesn’t fully fix that situation for your Mac, but as long as you’re running the app, you can adjust its performance output. To start with, you can choose to run the AI-powered functionalities in Vector solely on the CPU. If you want better performance, you can combine the CPU and the GPU, or push the CPU and the neural chip (NPU) together for faster output.

And if you’re not worried about the power draw, the app also lets you split the workload across the CPU, GPU, and the NPU simultaneously. Apple’s M-series processors come with a fairly powerful Neural Engine, so for most Macs the best combination for running Vector is splitting the workload across the CPU and NPU.

In case you have a beefier flavor of the silicon, such as the M4 Pro or M4 Max — both of which pack more GPU cores — it’s worth selecting the performance profile that also puts the GPU into the mix. Based on the chip fitted inside your Mac (and the kind of performance you are chasing), you can make a few other modifications.
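The rule of thumb above boils down to a small decision. Here's a hedged sketch of it. The profile names, the `suggest_profile` helper, and the `power_sensitive` flag are hypothetical and only encode my own recommendation, not any logic inside Vector. For what it's worth, Apple's Core ML framework exposes a similar four-way choice (CPU only, CPU and GPU, CPU and Neural Engine, or all), though I can't confirm that is what Vector uses under the hood.

```python
from enum import Enum

class ComputeProfile(Enum):
    """Hypothetical names for the four options described above."""
    CPU_ONLY = "CPU"
    CPU_AND_GPU = "CPU + GPU"
    CPU_AND_NPU = "CPU + NPU"
    ALL = "CPU + GPU + NPU"

def suggest_profile(chip, power_sensitive=False):
    """Apply the article's rule of thumb to a chip name such as 'M4 Pro'."""
    # Pro and Max variants pack more GPU cores, so the GPU is worth including.
    has_big_gpu = chip.strip().lower().endswith(("pro", "max"))
    if has_big_gpu and not power_sensitive:
        return ComputeProfile.ALL
    # Default recommendation: lean on the Neural Engine alongside the CPU.
    return ComputeProfile.CPU_AND_NPU

for chip in ("M4", "M4 Pro", "M4 Max"):
    print(chip, "->", suggest_profile(chip).value)
```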

The first is the embedding model. BGE-Small, which is just 64MB in size, runs by default and is powerful enough for contextual search across your local files and messages. However, if you want better responses and support for more languages, the BGE-M3 model is where you should look.

M3, here, stands for multi-functionality, multi-linguality, and multi-granularity, which is pretty self-explanatory as far as the model’s benefits go. This one takes slightly over 1GB of space but performs much better at retrieval of contextual information and supports longer inputs worth around six thousand words (8192 tokens).
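If you're wondering where the "around six thousand words" figure comes from, it's back-of-the-envelope arithmetic. The sketch below assumes roughly 0.75 English words per token, which is a common rule of thumb rather than an exact property of the BGE tokenizer. The real ratio varies with language and content.

```python
WORDS_PER_TOKEN = 0.75  # assumption: rough average for English text

def approx_words(tokens, words_per_token=WORDS_PER_TOKEN):
    """Estimate how many words fit in a given token budget."""
    return int(tokens * words_per_token)

print(approx_words(8192))  # 6144
```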

You can separately set the speed at which content from the Messages app and your files is indexed. The app runs fully offline, meaning none of the data ever leaves your Mac. But if you're still on the fence about the privacy aspect, you can separately disable indexing (and semantic search) for Messages and the files container.

From a functional perspective, Vector is extremely responsive, well-designed, and thoughtfully executed. The only area where it needs improvement is semantic search and understanding. That’s largely outside the developer’s control, since it can only be fixed by fine-tuning the underlying AI model or switching to a smarter one.

For now, you can’t load an AI model of your choice. I would’ve loved to try one of Google’s Gemma series models, or those from the DeepSeek and Qwen families. Additionally, it would’ve been amazing to deploy specific AI models for certain tasks. For example, contextual image search would require a multi-modal AI for the best results.

There are already plenty of open-source models on Hugging Face that can pull it off. Running SmolVLM2 on the iPhone 16 Pro for visual identification (even through the camera feed) was a fairly rewarding experience. Overall, if you’re looking for a low-stakes and minimally intrusive Spotlight alternative, Vector fills that gap pretty well. It’s only let down by the underlying AI brains in a few areas.
