China's AI Edge: Powering Progress with the World's Largest Grid

The Power Play in the Global AI Race

China has emerged as a formidable player in the global artificial intelligence (AI) race, leveraging its vast power grid to gain a strategic advantage. While the U.S. leads in developing advanced AI models and controls access to cutting-edge computer chips, China is capitalizing on its unique resource: affordable and abundant electricity.

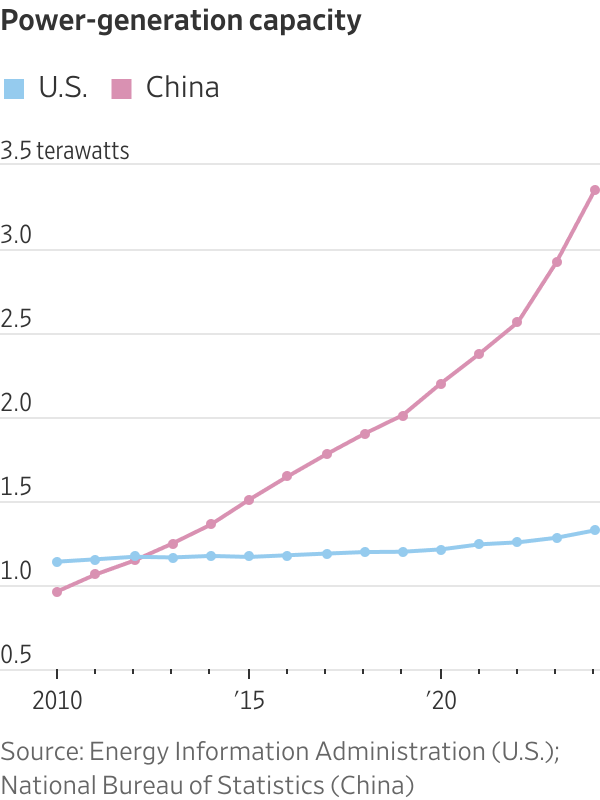

Between 2010 and 2024, China’s power production increased by more than the rest of the world combined. Last year alone, it generated more than twice as much electricity as the U.S. This massive energy output has allowed Chinese data centers to operate at significantly lower costs compared to their American counterparts. Some Chinese data centers now pay less than half what American ones do for electricity, giving them a competitive edge in AI development.

Liu Liehong, head of China’s National Data Administration, emphasized that “electricity is our competitive advantage.” This sentiment reflects the country’s broader strategy to harness its power resources for AI innovation.

The Rise of the "Cloud Valley of the Grasslands"

Remote regions like Inner Mongolia are becoming key battlegrounds in this power-driven AI competition. Known for its wide-open spaces, Inner Mongolia is now dotted with thousands of wind turbines and crisscrossed by transmission lines. These infrastructure developments support what officials call a new “cloud valley of the grasslands,” with over 100 data centers already in operation or under construction.

Morgan Stanley forecasts that China will spend $560 billion on grid projects through 2030, a 45% increase from the previous five years. Goldman Sachs predicts that by 2030, China will have about 400 gigawatts of spare capacity—three times the world’s expected data-center power demand at that time.

This push for power supremacy is not without challenges. The U.S.-China “electron gap” has become a major concern for American tech leaders. Microsoft CEO Satya Nadella has expressed worries about the company’s ability to secure enough power to run its growing number of chips. Some companies are urging Washington to streamline regulations or provide financial support to modernize America’s power grid.

The U.S. Faces an Electricity Shortfall

Morgan Stanley warns that U.S. data centers could face an electricity shortfall of 44 gigawatts in the next three years—equivalent to New York state’s summertime capacity. This poses a significant challenge for the nation’s AI ambitions.

In contrast, China’s low-cost electricity has enabled AI companies like DeepSeek to develop high-quality AI models more affordably than their U.S. competitors. It has also helped China overcome limitations in its domestic chip industry. By bundling large numbers of its own chips together, China can approach the performance of advanced chips made by Nvidia, though this process requires substantial electricity.

On Monday, President Trump announced a shift in policy, allowing U.S. chipmaker Nvidia to export its H200 chips to China. While not the company’s most advanced offering, these chips are more powerful than the best Chinese alternatives.

A Nationwide Grid Strategy

China’s power expansion is rooted in a government initiative launched in 2021 called “East Data, West Computing.” This plan aims to use the abundant power resources in western China to meet the AI-driven demand from the densely populated east. By connecting hundreds of data centers, the goal is to create a shared nationwide compute pool—a “national cloud”—by 2028.

The scale of China’s planned spending, along with underused power and data-center capacity, has raised concerns about overcapacity and potential market bubbles. However, Chinese officials believe state planning can help manage these risks.

The Growing Energy Demand of AI

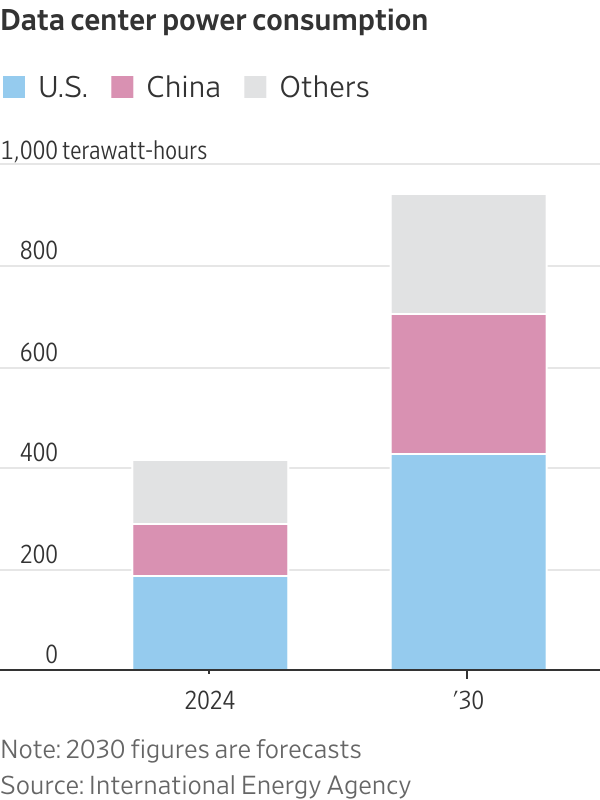

As AI continues to evolve, the energy demands of data centers are rising rapidly. Every chatbot query requires power for an AI model to respond. By 2030, China’s data centers are expected to consume as much electricity each year as all of France.

U.S. data centers use even more electricity. According to the International Energy Agency, they accounted for 45% of global data-center electricity consumption last year, compared with 25% for China.

A Legacy of Power Expansion

China’s power grid expansion dates back to the 1970s. Communist Party leaders, concerned about electricity shortages, directed state-owned companies to build coal-fired power plants. Later, they invested heavily in renewables, including hydroelectric projects, solar fields, and wind farms.

To transport power from remote locations to population centers, China built the world’s largest network of ultrahigh-voltage transmission lines. Since 2021, the country has invested over $50 billion in this infrastructure, according to state media.

Today, China has 3.75 terawatts of power-generation capacity—more than double that of the U.S. It also has 34 nuclear reactors under construction, with nearly 200 others planned or proposed. In Tibet, China is building the world’s largest hydropower project, which could produce three times the power of the Three Gorges Dam.

Cost Differences in Power

Chinese data centers can secure power for as little as 3 cents per kilowatt-hour using long-term purchase agreements, according to the National Energy Administration. In the U.S., operators in markets like northern Virginia typically pay 7 to 9 cents per kilowatt-hour, said Michael Rareshide, a partner at Site Selection Group.
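To put the price gap in concrete terms, the sketch below estimates the annual electricity bill for a hypothetical 100-megawatt data center running around the clock. The 100 MW load and the constant full utilization are illustrative assumptions, not figures from the sources above; only the per-kilowatt-hour prices come from the text.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_power_cost_usd(load_mw: float, cents_per_kwh: float) -> float:
    """Yearly electricity bill for a facility drawing load_mw continuously."""
    kwh_per_year = load_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, then kW * hours
    return kwh_per_year * cents_per_kwh / 100  # cents -> dollars


ASSUMED_LOAD_MW = 100  # hypothetical facility size, chosen only for illustration

# Prices quoted in the article, in cents per kilowatt-hour.
prices_cents_per_kwh = {
    "China (long-term agreement, low end)": 3,
    "Northern Virginia (low end)": 7,
    "Northern Virginia (high end)": 9,
}

costs = {
    label: annual_power_cost_usd(ASSUMED_LOAD_MW, price)
    for label, price in prices_cents_per_kwh.items()
}

for label, cost in costs.items():
    print(f"{label}: ${cost / 1e6:.1f} million per year")
```

Under these assumptions, the bill comes to roughly $26 million a year at 3 cents, against about $61 million to $79 million at 7 to 9 cents.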

Economic Strains and Policy Challenges

China’s aggressive energy spending has led to a growing debt burden. At State Grid, the state-owned grid operator, debt and other liabilities grew by over 40% from 2019 to 2024, reaching around $450 billion.

In the U.S., some tech companies are building their own power plants to support data centers. President Trump has pledged to match China’s power build-out, aiming to position the U.S. to “win the AI race while lowering energy prices and increasing grid efficiency.”

However, expanding the grid remains a challenge. The Solar Energy Industries Association recently warned that permitting policies and insufficient transmission capacity are stalling U.S. AI leadership. In eighteen states, including major hosts of U.S. data centers, more than half of planned solar and storage capacity is at risk of being blocked.

The "Cloud Valley" of Ulanqab

The city of Ulanqab and neighboring Horinger County in Inner Mongolia have become key hubs in the “East Data, West Computing” program. These areas were chosen for their access to inexpensive electricity and the opportunity to bring investment to poorer regions.

Officials prioritize regulatory reviews and land acquisition for data centers in these hubs. Some data centers pay only half their electricity bills, with the rest covered by government subsidies.

Despite a population decline of a quarter between 2019 and 2024, many residents see opportunity in the region’s growing data center industry. The cool climate reduces cooling costs, and the open landscape supports solar and wind farms.

The Future of AI in China

Ulanqab’s gross regional product has grown by 50% over the past five years. From 2019 to 2024, electricity used by data centers and IT services rose by over 700%. Local authorities reported attracting $35 billion in computing-industry investments as of June.

A drive along national highway 110 reveals the rapid transformation. On one side, buildings are largely deserted, while on the other, a data center run by Centrin Data provides cloud-computing services to clients in Beijing.

Apple, Alibaba, and Huawei also have data centers in the area. Companies like XPeng use the region for training AI models and processing workloads.

Overcoming Chip Limitations

To compensate for less advanced domestic chips, Chinese companies like Huawei, Alibaba, and Baidu aim to boost computing power by bundling thousands of Chinese chips. However, this approach requires sophisticated networking technology and dispatch algorithms.

Nvidia abandoned a similar system using 256 chips due to high costs, excessive power consumption, and unreliability. Huawei’s CloudMatrix 384 system, which bundles 384 Ascend chips, offers two-thirds more computing power than Nvidia’s flagship system containing 72 Blackwell chips, but it consumes four times the power, according to SemiAnalysis.
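The trade-off in these figures can be worked out directly. The sketch below takes the two ratios reported above (two-thirds more computing power, four times the power draw) and derives compute per watt and compute per chip relative to the Nvidia system. The variable names are illustrative, and the results are only as reliable as the reported ratios.

```python
from fractions import Fraction

HUAWEI_CHIPS = 384  # Ascend chips in CloudMatrix 384
NVIDIA_CHIPS = 72   # Blackwell chips in the Nvidia system

# Ratios relative to the Nvidia system (Nvidia = 1), as reported in the article.
relative_compute = 1 + Fraction(2, 3)  # "two-thirds more computing power"
relative_power = Fraction(4)           # "four times the power"

# Compute delivered per unit of power, relative to Nvidia.
compute_per_watt = relative_compute / relative_power

# Compute delivered per chip, relative to one Blackwell chip.
compute_per_chip = relative_compute * NVIDIA_CHIPS / HUAWEI_CHIPS

# Power needed per unit of compute, relative to Nvidia.
power_per_unit_compute = 1 / compute_per_watt

print(f"Compute per watt:       {float(compute_per_watt):.0%} of Nvidia's")
print(f"Compute per chip:       {float(compute_per_chip):.0%} of a Blackwell chip")
print(f"Power per unit compute: {float(power_per_unit_compute):.1f}x Nvidia's")
```

On these numbers the Huawei system delivers about 42% of the compute per watt, so it needs about 2.4 times the electricity for the same work. That helps explain why cheap power matters to the bundling strategy.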

Engineers who have used the Huawei system describe it as complex to install and operate. Some say it isn’t smooth enough for training large-scale AI models.

The Road Ahead

The main challenge for Chinese tech companies is the inability to produce leading-edge chips fast enough. U.S. export controls also restrict access to advanced chip-making equipment. Chinese firms have found ways to circumvent these restrictions, such as purchasing American chips through underground channels or tapping into chips in foreign data centers.

Analysts predict that shortages of high-end chips will persist for several more years. It remains unclear how many H200 chips China will buy or how much the change in export restrictions will impact the situation. China’s government has reiterated its commitment to developing its own advanced chips.

“For the near term, China’s lack of leading-edge chip capacity is a tighter constraint than the U.S.’s power bottleneck,” said Qingyuan Lin, a semiconductor analyst at Bernstein. He added that China’s power capacity keeps it in the game.

“The longer the AI race lasts,” Lin and his colleagues wrote in a report, “the more opportunities there will be for China to close the gap.”
